Agentic AI cost is the total monthly spend to run an autonomous AI agent — covering LLM token usage, orchestration infrastructure, vector databases, monitoring, governance, and engineering time. In 2026, most deployments exceeded initial budgets by 2× or more, because vendors quote the license fee and bury everything else.

The demo was four minutes long. An AI agent browsed the web, summarised a PDF, wrote a full report, sent an email, and booked a follow-up — all on its own. The pricing slide read: “Starting at $499 per month.”

You signed. Three months later, your cloud billing dashboard looks like someone left a fire hose running inside a swimming pool.

Here is the uncomfortable truth — this is not a billing glitch. This is the standard agentic AI sales cycle in 2026, playing out the exact same way for thousands of builders, founders, and tech enthusiasts every single month. The gap between the demo and the invoice is not an accident. It is architecture. It is a billing engine that was designed into the bones of how these systems work — and nobody puts it on the slide deck.

This is the forensic audit they didn’t want you to read. We are opening every hidden cost layer, naming the exact mechanisms inflating your bill, and documenting what real people discovered on the internet after the invoices landed. Then — because a real audit gives you the fix — we hand you the god-mode bypass: five technical moves that cut your spend by 60 to 80 percent without sacrificing any output quality.

→ But first, you need to understand why the billing engine exists.

The bypass only makes sense after you see the lock. Keep reading before you scroll to the fix.


Part I — The Setup

The Big Tech Lie — What They’re Actually Selling You

Every agentic AI vendor is telling you a variation of the same sentence right now:

“Pay only for what you use. Our agents are efficient, modular, and transparent.”

— Every agentic AI pitch deck, 2024–2026

That sentence is technically true. It is also forensically misleading. “What you use” in an agentic system means something completely different from any other software subscription you have ever signed.

A standard AI chatbot processes your message once and returns an answer. One call. One bill. An agentic AI system doesn’t do that. It takes your instruction and thinks out loud, in a loop. It plans. It picks a tool. It calls the tool. It reads the result. It decides if the result is good enough. If not — it tries again. It may spin up sub-agents. Each of those steps is a separate API call. Every call burns tokens. And unlike a chatbot, where things stop when you stop typing, an agent keeps running while you’re asleep.

Where a standard AI inference costs roughly $0.001 per call, a complex agentic decision cycle costs $0.10 – $1.00. That is a 100× to 1,000× gap. At production volume over 30 days, that is not a rounding error. It is the entire budget.

↑ Every loop cycle appends to the context window. By iteration 10 on the same task, you’re burning 20× what you started with.

🔒 The Kernel-Level Lock — Why Agents Are Structurally Expensive

Modern AI agents run on what engineers call the ReAct pattern — Reason, Act, Observe. Here is what each step costs you on every single pass through the loop.

The Agent Thinks About the Current State

The full system prompt, all tool descriptions, and the entire conversation history are sent with every reasoning call. Even on step 2 of a 10-step task, you are paying for everything that happened before it.

The Agent Calls a Tool or Sub-Agent

This burns tokens plus external compute. The result — which might be 3,000 words of scraped page content — is then appended to the context window before the next step.

The Agent Reads the Result and Reasons Again

Now the context is longer. The next call processes everything before it. A loop starting at 2,000 tokens can be consuming 40,000 tokens per call by iteration 10 — on the exact same task. This compounding is not a bug. It is the design.
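To make the compounding concrete, here is a minimal sketch of the per-call token count when every observation is appended to the context. The 4,000 tokens added per step is an assumption (roughly the 3,000-word scraped page mentioned above); the function and parameter names are illustrative and not taken from any framework.

```python
def per_call_tokens(base_tokens: int, tokens_added_per_step: int, iterations: int) -> list[int]:
    """Input tokens sent on each call when every observation stays in the context."""
    return [base_tokens + step * tokens_added_per_step for step in range(iterations)]


# Assumption: each tool result appends about 4,000 tokens to the context.
calls = per_call_tokens(base_tokens=2_000, tokens_added_per_step=4_000, iterations=10)

print("first call:", calls[0])
print("tenth call:", calls[-1])
print("total input tokens billed:", sum(calls))
print("total if the context never grew:", calls[0] * len(calls))
```

Under these assumptions the tenth call alone is close to the 40,000-token figure above, and the loop as a whole bills ten times the input tokens of a loop whose context never grew.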

This is what the billing engine runs on. Now let’s audit every layer on top of it.

Part II — The Full Breakdown

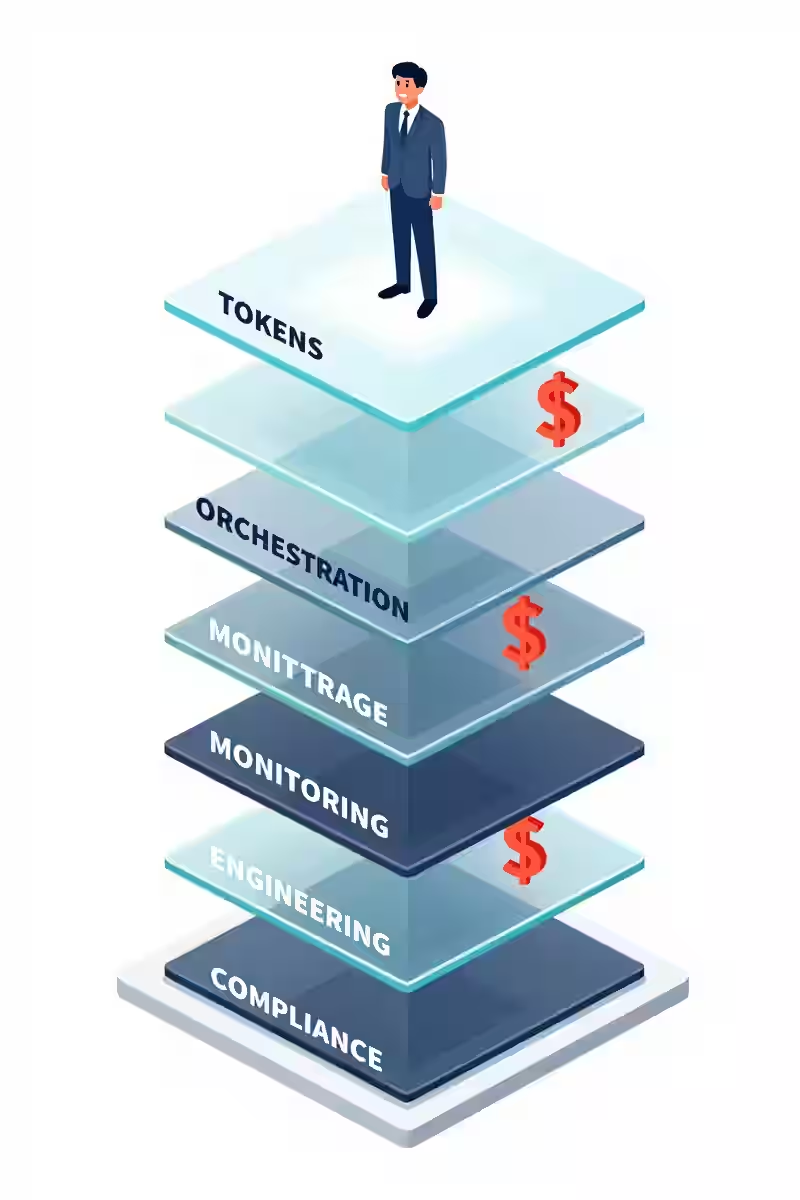

The 6-Layer Cost Audit Nobody Runs Before Signing

Most people hear “AI agent cost” and think: API fees. That is Layer 1. There are five more — and together they are what turns a $499 line item into a $2,500 or $40,000 per-month reality. Here is every layer, opened up and priced honestly.

↑ The vendor shows you the top shelf. You pay for all six.

LLM Token Costs — The Visible Layer

The only layer the vendor shows you. Also the most misleading one — because the per-token price looks harmless in isolation.

| Model | Input / 1M Tokens | Output / 1M Tokens | Agentic Risk |
|---|---|---|---|
| GPT-4o | ~$2.50 | ~$10.00 | 🔴 Very High |
| Claude Sonnet 3.7 | ~$3.00 | ~$15.00 | 🔴 Very High |
| Claude Haiku 3.5 | ~$0.80 | ~$4.00 | 🟡 Medium |
| Gemini 1.5 Flash | ~$0.075 | ~$0.30 | 🟢 Low |
| Llama 3.3 70B (hosted) | ~$0.23 | ~$0.40 | 🟢 Low |

Those prices look manageable until you understand that a typical unoptimised multi-agent pipeline burns 10–50× more tokens than necessary — the full system prompt resent every call, all tool descriptions included regardless of relevance, memory appended rather than summarised, every task routed to the most expensive model by default.

Real example: one startup’s support agent burned 1.4 million tokens per day for 200 support tickets, or $315/month. An optimised equivalent came in under $30 at the same quality, so the unoptimised pipeline cost roughly ten times as much.
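The arithmetic behind that example can be checked in a few lines. This is a rough sketch: the blended price per million tokens is not stated in the example, so it is derived here from the $315 and 1.4 million figures, and a 30-day month is assumed.

```python
def monthly_token_cost(tokens_per_day: float, usd_per_million_tokens: float, days: int = 30) -> float:
    """Monthly spend for a steady daily token volume at a blended per-million price."""
    return tokens_per_day * days / 1_000_000 * usd_per_million_tokens


TOKENS_PER_DAY = 1_400_000
TICKETS_PER_DAY = 200
MONTHLY_BILL_USD = 315

monthly_tokens = TOKENS_PER_DAY * 30
# Derived, not quoted: the blended rate implied by the bill above.
implied_rate = MONTHLY_BILL_USD / (monthly_tokens / 1_000_000)
tokens_per_ticket = TOKENS_PER_DAY / TICKETS_PER_DAY

print(f"implied blended rate: ${implied_rate:.2f} per 1M tokens")
print(f"tokens per ticket: {tokens_per_ticket:,.0f}")
print(f"monthly cost at that rate: ${monthly_token_cost(TOKENS_PER_DAY, implied_rate):.2f}")
```

The tokens-per-ticket number is the one to watch: several thousand tokens to answer a single support ticket usually points to resent prompts and unsummarised history rather than genuine work.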

And then there is the hidden Layer 1 bomb: the retry storm. One documented case — a LangChain agent misread a rate-limit error as “try again,” ran for 11 days with no kill switch, and generated a $47,000 bill the team mistook for organic traffic growth.

Orchestration Infrastructure

| Component | Monthly Cost Range |
|---|---|
| Cloud compute (agent runtime) | $200 – $2,000 |
| Task queuing (Redis, SQS) | $50 – $400 |
| Workflow orchestration (Temporal, Prefect) | $150 – $800 |
| Agent hosting platform | $100 – $500 |
| Layer 2 Subtotal | $500 – $3,700 / month |

None of this appears on any vendor pricing page. It is pure infrastructure and it scales with task volume independently of your LLM fees. This is the layer that ambushes mid-size teams between months two and four.

Vector Database & Memory

| Provider | Free Tier | Production / Month |
|---|---|---|
| Pinecone | 2 GB / 100K queries | $70 – $2,500 |
| Weaviate Cloud | Limited sandbox | $250 – $1,500 |
| Chroma (self-hosted) | Free locally | Server costs apply |
| pgvector on Postgres | Varies | $50 – $800 |

The trap activates when your agent does semantic search at scale — querying a 500,000-document corpus dozens of times per user request. The query volume stacks silently while you focus entirely on the LLM spend line item.

Monitoring & Observability

The layer almost every first-time builder skips — until something breaks and they have no idea why. And something always breaks. Without observability you cannot see which calls are expensive, where loops start, or when quality silently degrades.

| Tool | Purpose | Monthly Cost |
|---|---|---|
| LangSmith / Langfuse | Trace runs, debug calls | $200 – $800 |
| Helicone | LLM cost tracking & caching | $100 – $400 |
| Datadog / New Relic | Infrastructure monitoring | $200 – $1,000 |
| Layer 4 Subtotal | | $500 – $2,200 / month |

Skipping this does not save money. It costs money — because you cannot see the fire until it has already burned for days.

Integration & Engineering Maintenance

| Task | Hrs / Month | Cost at $100/hr |
|---|---|---|
| Integration maintenance & repair | 10 – 30 hrs | $1,000 – $3,000 |
| Prompt engineering & tuning | 8 – 20 hrs | $800 – $2,000 |
| Error triage & debugging | 5 – 15 hrs | $500 – $1,500 |
| Layer 5 Subtotal | 23 – 65 hrs | $2,300 – $6,500 / month |

Every external integration breaks eventually. APIs change. Tokens expire. Rate limits shift. At the 3–6 month mark, when the pilot enthusiasm fades, the real maintenance cadence begins — and this layer hits hardest.

Compliance, Governance & Safety

| Requirement | Annual Cost |
|---|---|
| Data residency & sovereignty compliance | $10,000 – $50,000 |
| AI audit trail, logging & explainability | $5,000 – $25,000 |
| Red-teaming & safety evaluation | $15,000 – $60,000 |
| Legal review & vendor contracts | $5,000 – $20,000 |
| Total Annual Overhead | $35,000 – $155,000 / year |

Gartner projects that over 40% of agentic AI projects will be cancelled by the end of 2027. Compliance cost surprise is consistently in the top three cited reasons.

📊 The Monthly Reality Check — Optimised vs. Not

| Deployment size | Unoptimised / month | Optimised / month |
|---|---|---|
| Solo builder | $400 – $1,200 | $70 – $200 |
| Small team | $3,000 – $9,000 | $800 – $2,500 |
| Mid-size | $12,000 – $40,000 | $3,000 – $8,000 |
| Enterprise | $100,000 – $400,000+ | $20,000 – $80,000 |

Part III — Community Evidence

The Rage Files — Real People, Real Bills

What follows is not opinion. It is forensic documentation of patterns that surfaced consistently across developer and AI communities in early 2026. Three clusters appeared with enough frequency to be treated as data — not anecdotes.

↑ This is the face of finding a $3,600 invoice at 2am. Happening to real builders every week.

🔴 “The Invisible Bill”

The most common complaint: users finding large bills by accidentally checking their billing dashboard. Not through an alert. Not through a notification. By chance.

“I had absolutely no idea which conversations cost what until I saw the invoice.”

“I said usage-based and genuinely thought it would scale down when I wasn’t actively using it. It does not scale down.”

“I closed the app. The agent kept running in the background for three more days.”

One fully documented case: a developer’s personal research agent ran for 30 days. When the credit card charged $3,600, investigation found 180 million tokens consumed — re-processing the same documents on repeat because no deduplication logic existed.

🔍 FORENSIC FINDING: Most agentic platforms default to zero spend alerts, zero hard caps, zero auto-pause. These must all be manually configured. They are OFF by default because turning them ON by default reduces platform billing revenue.

🔴 “The Infinite Loop Tax”

The $47,000 LangChain loop story became the single most-shared cautionary tale of early 2026. But it was far from unique. A separate incident: a product research pipeline had one sub-agent checking competitor pricing pages. The target site returned inconsistent HTML. The agent read it as “data not found.” Its instruction was: if data not found, retry. No maximum. No backoff. It polled the same URL every 90 seconds for eight straight days.

“The result: $23,000 in API fees, a blocked IP address, and a legal notice from the competitor’s hosting provider.”

A third incident: a data enrichment agent consumed $180,000 in API credits over a single weekend from a malformed JSON response triggering an infinite retry cascade. Discovered Monday morning.

🔍 FORENSIC FINDING: Agents are given goals. They are not given budgets. They are optimised for task completion — not cost efficiency. Until cost-awareness is enforced as hard infrastructure, the agent will spend whatever it takes to finish the goal.

🔴 “The License Lie”

Threads titled “what my $499/month AI tool actually costs” now regularly accumulate hundreds of comments. The pattern is structural, not accidental. One small business owner posted this breakdown after six months of real deployment:

- Vendor license fee: $499/month
- AWS compute for agent runtime: $380/month
- Pinecone vector database: $420/month
- LangSmith monitoring: $250/month
- Integration contractor (8 hrs): $960/month

Total actual cost: $2,509/month

“I was sold a $499/month solution. I am running a $2,500/month operation. Nobody lied to me technically. But nobody told me the truth either.”
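A quick way to run the same audit on your own stack is to list every monthly line item next to the license fee and compare. The sketch below uses the figures from the breakdown above; the dictionary keys are illustrative labels, not vendor SKUs.

```python
monthly_costs_usd = {
    "vendor_license": 499,
    "aws_compute": 380,
    "pinecone_vector_db": 420,
    "langsmith_monitoring": 250,
    "integration_contractor": 960,
}

total = sum(monthly_costs_usd.values())
license_fee = monthly_costs_usd["vendor_license"]
multiple_of_sticker = total / license_fee   # how many times the advertised price
hidden_share = 1 - license_fee / total      # share of spend absent from the pricing slide

print(f"total: ${total:,}/month")
print(f"multiple of advertised price: {multiple_of_sticker:.2f}x")
print(f"share not on the pricing slide: {hidden_share:.0%}")
```

In this case roughly four of every five dollars are spent outside the advertised license fee.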

🔍 FORENSIC FINDING: The advertised price covers Layer 1 at best. Layers 2 through 6 are entirely the buyer’s problem — and they are never mentioned during the sales process.

Part IV — The Fix

The Bypass — God-Mode Cost Control

Not theory. Not sympathy. The exact five technical moves that shift agentic AI from unpredictable liability to fully controlled infrastructure. Apply all five — they compound on each other.

↑ Five switches. All green. This is what a controlled agentic AI deployment looks like — 60–80% cheaper than default.

Two-Tier Model Routing

Not every task needs a frontier model. Routing 70–80% of operations to a cheap, fast model and reserving the expensive one only for genuine reasoning work changes the economics completely.

Route by task type, not by habit.

EXPENSIVE (GPT-4o / Claude Sonnet):

- Complex multi-step reasoning
- Final output synthesis & editing
- Ambiguous edge cases

CHEAP (Gemini Flash / Claude Haiku / Llama 70B):

- Classification & routing
- Data extraction
- Format conversion
- Yes/no condition checks
- Summarising completed steps

Real result: before routing, $180/month with all tasks to GPT-4o. After routing, $70/month with 80% on Claude Haiku. Quality difference on routed tasks: negligible. That is a 61% cost reduction from one architectural decision.
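A two-tier router can be as small as a lookup table. The sketch below is one possible shape, not a framework API: the task-type labels, the `Tier` enum, and the `route` function are all hypothetical names, and the choice to send unknown task types to the expensive tier is an assumption that favours quality over cost.

```python
from enum import Enum


class Tier(Enum):
    CHEAP = "cheap"
    EXPENSIVE = "expensive"


# Hypothetical task labels mirroring the routing split described above.
CHEAP_TASKS = {
    "classification",
    "data_extraction",
    "format_conversion",
    "condition_check",
    "step_summary",
}
EXPENSIVE_TASKS = {"multi_step_reasoning", "final_synthesis", "ambiguous_edge_case"}


def route(task_type: str) -> Tier:
    """Pick a model tier from the task type instead of defaulting to the frontier model."""
    if task_type in CHEAP_TASKS:
        return Tier.CHEAP
    # Known-hard and unknown task types both go to the expensive tier (assumption).
    return Tier.EXPENSIVE


tasks = ["classification", "data_extraction", "final_synthesis", "condition_check", "step_summary"]
expensive_share = sum(route(t) is Tier.EXPENSIVE for t in tasks) / len(tasks)
print(f"share of calls on the expensive tier: {expensive_share:.0%}")
```

Logging every task type that falls through to the default is worth the extra line in practice, because a growing list of unknowns is how the expensive tier quietly creeps back above its target share.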

Prompt Caching

Anthropic’s Claude API and several other providers cache repeated prompt sections server-side and bill cache reads at up to 90% less than normal input token pricing (on Anthropic, writing a section to the cache carries a premium over the base input price, so the saving comes from repeated reads). Most pipelines do not use this: every call sends the full 3,000–8,000 token system prompt as if it were brand new.

Anthropic API: mark static sections as cached by adding `cache_control` to the content block: `{ "type": "text", "text": "[Your full static system prompt]", "cache_control": { "type": "ephemeral" } }`

Combined with two-tier model routing, prompt caching reduces total token spend by 50–70% on most production pipelines. It has the highest ratio of impact to effort of any change you can make.
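Here is a minimal sketch of how the cached block fits into a full request, plus a rough estimate of what caching does to billed input tokens. The request shape follows Anthropic's Messages API, where `system` may be a list of text blocks; the model id is a placeholder, the helper names are hypothetical, and the estimate ignores cache-write premiums, cache expiry, and minimum cacheable lengths (all simplifying assumptions).

```python
from typing import Any


def build_cached_request(model: str, static_system_prompt: str,
                         user_message: str, max_tokens: int = 1024) -> dict[str, Any]:
    """Build Messages API keyword arguments with the static prompt marked cacheable."""
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": [
            {
                "type": "text",
                "text": static_system_prompt,
                # Marks everything up to and including this block as a cacheable prefix.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "messages": [{"role": "user", "content": user_message}],
    }


def effective_input_tokens(static_tokens: int, dynamic_tokens: int, calls: int,
                           cache_read_discount: float = 0.9) -> float:
    """Billable-equivalent input tokens when calls after the first read the prefix from cache."""
    if calls < 1:
        return 0.0
    first_call = static_tokens + dynamic_tokens
    later_call = dynamic_tokens + static_tokens * (1 - cache_read_discount)
    return first_call + (calls - 1) * later_call


# "your-model-id" is a placeholder, not a real model name.
request = build_cached_request("your-model-id", "You are a support agent...", "Where is my order?")

# Illustrative numbers: 5,000-token static prompt, 500 dynamic tokens, 100 calls.
uncached = (5_000 + 500) * 100
cached = effective_input_tokens(static_tokens=5_000, dynamic_tokens=500, calls=100)
print(f"input tokens billed: {uncached:,} uncached vs {cached:,.0f} cached-equivalent")
```

With the official `anthropic` Python SDK, a dictionary like `request` would be passed as keyword arguments to `messages.create`. The important design point is ordering: static content goes first and anything that changes per call goes after the cached block, because a changed prefix invalidates the cache.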

Hard Budget Circuit Breakers

“Do not spend too much” in a system prompt is advice. A circuit breaker is a stop. Enforce these at the infrastructure level — not in a prompt.

- Per-run token ceiling — if hit, the agent returns partial results and logs a warning. It does not retry.

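- Daily spend cap: set a hard dollar limit at the billing layer. Anthropic, OpenAI, and Google Cloud expose spend limits or budget alerts in their billing settings, though the granularity (monthly or daily, hard stop or alert only) varies by provider. Where no hard daily cap exists, enforce one in your own gateway. Almost nobody configures it.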

- Retry logic: max 3, with backoff — 1s → 2s → 4s → stop. No unlimited retries under any circumstances.

- Dead man’s switch — check every 15 minutes that the agent is making forward progress. If the same step repeats N times with no state change, pause the agent and fire an alert. This is the kill switch for every infinite loop scenario in Part III.
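The four breakers above can be sketched in plain Python without any agent framework. Everything here is illustrative: `RunBudget`, `call_with_backoff`, and `StallDetector` are hypothetical names, and real code would catch only errors known to be retryable instead of every `Exception`.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class BudgetExceeded(Exception):
    """Raised when a run crosses its hard token ceiling."""


class RunBudget:
    """Per-run token ceiling enforced in code, not in the prompt."""

    def __init__(self, max_tokens: int) -> None:
        self.max_tokens = max_tokens
        self.used = 0

    def charge(self, tokens: int) -> None:
        self.used += tokens
        if self.used > self.max_tokens:
            raise BudgetExceeded(f"{self.used} tokens used, ceiling is {self.max_tokens}")


def call_with_backoff(fn: Callable[[], T], max_retries: int = 3, base_delay: float = 1.0,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Retry fn at most max_retries times, waiting 1s, 2s, 4s, then give up."""
    attempt = 0
    while True:
        try:
            return fn()
        except BudgetExceeded:
            raise  # a blown budget is a stop, never a reason to retry
        except Exception:
            if attempt >= max_retries:
                raise
            sleep(base_delay * 2 ** attempt)
            attempt += 1


class StallDetector:
    """Dead man's switch: flags a run that keeps reporting the same state."""

    def __init__(self, max_repeats: int) -> None:
        self.max_repeats = max_repeats
        self.last_state: Optional[str] = None
        self.repeats = 0

    def observe(self, state: str) -> bool:
        """Return True once the same state has been seen max_repeats times in a row."""
        if state == self.last_state:
            self.repeats += 1
        else:
            self.last_state, self.repeats = state, 1
        return self.repeats >= self.max_repeats
```

A caller would charge the budget after every model response, wrap each tool call in `call_with_backoff`, and feed a fingerprint of the agent's state (for example, the current step name plus a hash of its last output) to `StallDetector.observe` on a timer, pausing the run and alerting when it returns `True`.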

The $47,000 loop. The $23,000 retry storm. The $180,000 weekend. All prevented by circuit breakers that were never configured before deployment.

Context Window Hygiene

- Rolling summarisation every 5 steps — after every five observations, run a cheap-model summariser that compresses findings into 100 words. Remove the raw tool output from the live context.

- Tool injection by step only — if step 7 is “write a summary,” inject only the writing tool. Sending all 20 tool descriptions every call can waste 1,500–3,000 tokens per API request for no reason.

- Explicit output length control — “summarise in 3 bullet points, max 20 words each” costs 1/10th the tokens of “summarise this” with no constraints. Always specify format and length.
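The first two habits can be sketched as a small context manager and a per-step tool filter. This is a rough illustration under stated assumptions: `RollingContext`, `TOOLS_BY_STEP`, and `tools_for_step` are hypothetical names, and the summariser is passed in as a plain function so that a cheap-model call can be plugged in later.

```python
from typing import Callable


class RollingContext:
    """Keeps summaries plus only the most recent raw observations in the live context."""

    def __init__(self, summarise: Callable[[list[str]], str], every: int = 5) -> None:
        self.summarise = summarise
        self.every = every
        self.summaries: list[str] = []
        self.raw: list[str] = []

    def add_observation(self, text: str) -> None:
        self.raw.append(text)
        if len(self.raw) >= self.every:
            # Compress the batch, then drop the raw tool output from the live context.
            self.summaries.append(self.summarise(self.raw))
            self.raw = []

    def render(self) -> str:
        return "\n".join(self.summaries + self.raw)


# Hypothetical mapping from step name to the tools that step actually needs.
TOOLS_BY_STEP = {
    "search": ["web_search"],
    "write_summary": ["writer"],
}


def tools_for_step(step: str, all_tools: dict[str, str]) -> dict[str, str]:
    """Return only the tool descriptions relevant to the current step."""
    wanted = TOOLS_BY_STEP.get(step, [])
    return {name: all_tools[name] for name in wanted if name in all_tools}


# Stand-in summariser; in production this would call a cheap model.
context = RollingContext(summarise=lambda batch: f"[summary of {len(batch)} observations]")
for i in range(6):
    context.add_observation(f"raw tool output {i}")
print(context.render())
```

After six observations the live context holds one summary line and one raw observation instead of six raw tool outputs.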

Minimum Viable Observability (Under $100/Month)

| Tool | Purpose | Cost |
|---|---|---|
| Helicone (free tier) | Per-call cost tracking, latency | $0 – $50/mo |
| Langfuse (self-hosted) | Full trace visibility, step debugging | $0 (server only) |
| Provider budget alerts | Alert at 80% of monthly limit | Free |
| Daily cost webhook | Automated daily spend summary via email/Slack | Engineering time |

You should be able to answer these five questions in under 60 seconds at any moment: What is my spend today vs. yesterday? Which run cost most in 24hrs? What is my most expensive tool call? Is any agent in a retry loop? Am I on pace for my monthly limit? If you can’t answer all five immediately, the meter is running and you are not in control.
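If every model and tool call is logged with its cost, the five questions reduce to a handful of aggregations. The sketch below assumes a hypothetical `Call` record with a run id, tool name, step name, cost, and date; the field names, the repeat threshold of 3, and the linear month-end projection are all assumptions.

```python
import calendar
from collections import Counter, defaultdict
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Call:
    run_id: str
    tool: str
    step: str
    cost_usd: float
    day: date


def spend_on(calls: list[Call], day: date) -> float:
    """Total spend for one calendar day."""
    return sum(c.cost_usd for c in calls if c.day == day)


def most_expensive_run(calls: list[Call], day: date) -> Optional[str]:
    """Run id with the highest total spend on the given day."""
    totals: dict[str, float] = defaultdict(float)
    for c in calls:
        if c.day == day:
            totals[c.run_id] += c.cost_usd
    return max(totals, key=lambda run: totals[run]) if totals else None


def most_expensive_call(calls: list[Call]) -> Optional[Call]:
    """Single priciest call in the log."""
    return max(calls, key=lambda c: c.cost_usd, default=None)


def looping_runs(calls: list[Call], threshold: int = 3) -> set[str]:
    """Runs that executed the same step at least `threshold` times."""
    counts = Counter((c.run_id, c.step) for c in calls)
    return {run for (run, _step), n in counts.items() if n >= threshold}


def projected_month_spend(calls: list[Call], today: date) -> float:
    """Linear projection of month-to-date spend to the end of the month."""
    month_to_date = sum(
        c.cost_usd for c in calls
        if (c.day.year, c.day.month) == (today.year, today.month) and c.day <= today
    )
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return month_to_date / today.day * days_in_month
```

Comparing `spend_on` for today and yesterday answers the first question, and comparing `projected_month_spend` with the monthly limit answers the last. Wiring these five functions into a daily Slack or email message is the daily cost webhook from the table above.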

The pitch is “pay only for what you use.” It leaves out that a single agentic task cycles through 10–50× more tokens than a standard AI call, compounds with every iteration, and has no default ceiling on how long it runs or how many times it retries.

Unoptimised agentic AI routinely costs 3–10× the advertised price, driven by ReAct loop compounding, orchestration overhead, unmonitored retry storms, and five cost layers that are never shown in any demo or pricing page.

Two-tier model routing + prompt caching + hard circuit breakers + context window hygiene + basic observability = 60–80% cost reduction with no meaningful quality loss. Implementable in one engineering sprint. The agents are not the problem. The missing controls are. Now you have the controls.

Pre-Deployment Checklist

10 Switches. All Green. Before You Go Live.

Print this. Share it with whoever is about to hit deploy. These ten items are the entire gap between a controlled deployment and a five-figure surprise at the end of the month.

- Two-tier model routing configured — expensive model handles under 30% of all calls

- Prompt caching enabled on static system prompt and tool description blocks

- Hard spend cap set in your LLM provider’s billing settings or enforced in your own gateway, not in a prompt

- Per-run token ceiling enforced at the orchestration layer

- Retry logic has a maximum count of 3 with exponential backoff between attempts

- Dead man’s switch running every 15 minutes — auto-pause if no forward progress

- Rolling summarisation active — raw tool output removed from context every 5 steps

- Tool descriptions injected by step only — not all tools in every API call

- Observability dashboard live and verified before production traffic hits the agent

- Budget alert set at 80% of your monthly spending limit

Ten checkboxes. That is the only gap between the $47K surprise and never having one.


— The Honest Find
